Ancient Times

Early Man relied on counting on his fingers and toes (which, by the way, is the basis for our base 10 numbering system). He also used sticks and stones as markers. Later, notched sticks and knotted cords were used for counting. Finally came symbols written on hides, parchment, and later paper. Man invented the concept of number, then invented devices to help keep up with the numbers of his possessions.

Roman Empire

The ancient Romans developed an abacus, the first "machine" for calculating. While it predates the Chinese abacus, we do not know whether it was the ancestor of that abacus. Counters in the lower groove are worth 1 × 10^n each, and those in the upper groove are worth 5 × 10^n each, where n is the position of the column.
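As a rough illustration of that place-value scheme, the Python sketch below totals an abacus reading. It assumes each column n holds some lower counters worth 1 × 10^n each and some upper counters worth 5 × 10^n each. The function name and the input layout are made up for this example.

```python
def abacus_value(columns):
    """Total an abacus reading.

    `columns` is a list of (lower, upper) counter counts, starting with
    the ones column (n = 0). This layout is an assumption for the sketch.
    """
    total = 0
    for n, (lower, upper) in enumerate(columns):
        total += lower * 1 * 10**n   # lower-groove counters: 1 x 10^n each
        total += upper * 5 * 10**n   # upper-groove counters: 5 x 10^n each
    return total


# 3 lower + 1 upper in the ones column (8), 2 lower in the tens column (20)
print(abacus_value([(3, 1), (2, 0)]))  # 28
```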

Industrial Age - 1600

John Napier, a Scottish nobleman and politician, devoted much of his leisure time to the study of mathematics. He was especially interested in devising ways to aid computations. His greatest contribution was the invention of logarithms. He also inscribed multiplication tables on a set of 10 wooden rods and was thus able to do multiplication and division by matching up numbers on the rods. These became known as Napier’s Bones.

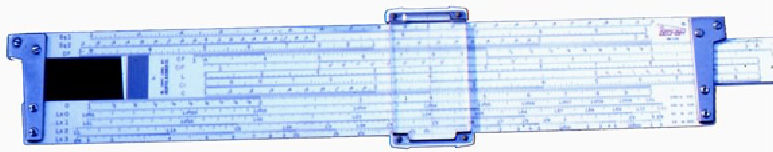

1621 - The Slide Rule

Napier invented logarithms and Edmund Gunter invented the logarithmic scale (lines etched on metal or wood), but it was William Oughtred, in England, who invented the slide rule. He inscribed logarithmic scales on strips of wood that slide against each other, creating a calculating "machine" that was used up until the mid-1970s, when the first hand-held calculators and microcomputers appeared.
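The idea behind a logarithmic scale is that adding two lengths proportional to log a and log b gives a length proportional to log(a × b). A minimal Python sketch of that principle follows. The function name is invented for this example, and a real slide rule is read by eye to only a few significant digits.

```python
import math

def slide_rule_multiply(a, b):
    """Multiply two positive numbers by adding their logarithms."""
    # Sliding one scale along the other adds two lengths,
    # and each length is proportional to log10 of a number.
    combined_length = math.log10(a) + math.log10(b)
    # Reading the result off the scale undoes the logarithm.
    return 10 ** combined_length

print(round(slide_rule_multiply(2, 3), 6))  # 6.0
```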

1642 - Blaise Pascal (1623-1662)

Blaise Pascal, a French mathematical genius, invented a machine at the age of 19 that could do addition and subtraction. He called it the Pascaline and built it to help his father, who was also a mathematician. Pascal’s machine consisted of a series of gears with 10 teeth each, representing the numbers 0 to 9. As each gear completed one turn, it would trip the next gear up to make 1/10 of a revolution. This principle remained the foundation of all mechanical adding machines for centuries after his death. The Pascal programming language was named in his honor.
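The carry principle described above can be sketched in a few lines of Python. This is only a model of the idea, not of Pascal's actual mechanism: the list-of-digits layout and the function name are assumptions made for illustration.

```python
def add_to_wheels(wheels, amount, position=0):
    """Advance wheel `position` by `amount` steps, carrying as needed.

    `wheels` is a list of digits 0-9, ones wheel first (an assumed layout).
    """
    wheels = list(wheels)
    for _ in range(amount):
        i = position
        while i < len(wheels):
            wheels[i] += 1
            if wheels[i] < 10:
                break
            wheels[i] = 0  # full turn: the wheel returns to 0 ...
            i += 1         # ... and trips the next wheel by one tooth
    return wheels

# 99 + 1: both lower wheels roll over and the hundreds wheel advances
print(add_to_wheels([9, 9, 0], 1))  # [0, 0, 1]
```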

1673 - Gottfried Wilhelm von Leibniz (1646-1716)

Gottfried Wilhelm von Leibniz invented differential and integral calculus independently of Sir Isaac Newton, who is usually given sole credit. He invented a calculating machine known as Leibniz’s Wheel or the Step Reckoner. It could add and subtract, like Pascal’s machine, but it could also multiply and divide. It did this by repeated additions or subtractions, the way mechanical adding machines of the mid to late 20th century did. Leibniz also invented something essential to modern computers: binary arithmetic.
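Multiplying by repeated addition and dividing by repeated subtraction can be sketched as follows. This Python example models only the arithmetic idea, not the Step Reckoner's gearing, and the function names are invented for illustration. The last line shows a number written in binary.

```python
def multiply(a, b):
    """Multiply non-negative integers by repeated addition."""
    total = 0
    for _ in range(b):
        total += a
    return total

def divide(a, b):
    """Divide non-negative a by positive b by repeated subtraction."""
    quotient = 0
    while a >= b:
        a -= b
        quotient += 1
    return quotient, a  # a is now the remainder

print(multiply(7, 6))  # 42
print(divide(45, 6))   # (7, 3)
print(bin(42))         # 0b101010
```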

1725 - The Bouchon Loom

Basile Bouchon, the son of an organ maker, worked in the textile industry. At this time fabrics with very intricate patterns woven into them were very much in vogue. To weave a complex pattern, however, involved somewhat complicated manipulations of the threads in a loom, which frequently became tangled, broken, or out of place.

Bouchon observed the paper rolls with punched holes that his father made to program his player organs and adapted the idea as a way of "programming" a loom. The paper passed over a section of the loom, and where the holes appeared certain threads were lifted. As a result, the pattern could be woven repeatedly. This was the first punched paper stored program. Unfortunately, the paper tore and was hard to advance. So Bouchon’s loom never really caught on and eventually ended up in the back room collecting dust.

1728 - Falcon Loom

In 1728 Jean-Baptiste Falcon substituted a deck of punched cardboard cards for the paper roll of Bouchon’s loom. This was much more durable, but the deck of cards tended to get shuffled, and it was tedious to continuously switch cards. So Falcon’s loom ended up collecting dust next to Bouchon’s loom.

1804 - Joseph Marie Jacquard (1752-1834)

It took inventor Joseph M. Jacquard to bring together Bouchon’s idea of a continuous punched roll and Falcon’s idea of durable punched cards to produce a really workable programmable loom. Weaving operations were controlled by punched cards tied together to form a long loop, and you could add as many cards as you wanted. Each time a thread was woven in, the roll was clicked forward by one card. The results revolutionized the weaving industry and made a lot of money for Jacquard. This idea of punched data storage was later adapted for computer data input.
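To make the "loop of cards" idea concrete, here is a small Python sketch. The cards, the four-thread width, and the function name are all invented for illustration. It shows only how a looped card deck repeats a pattern, not how a real loom works.

```python
from itertools import cycle, islice

# Each card is one row of the pattern: 1 = hole (thread lifted), 0 = no hole.
# The cards and the thread count are invented for illustration.
cards = [
    [1, 0, 1, 0],
    [0, 1, 0, 1],
    [1, 1, 0, 0],
]

def weave(cards, rows):
    """Return the lifted-thread indexes for each woven row."""
    woven = []
    # cycle() plays the role of the cards tied into a loop:
    # after the last card, the first one comes around again.
    for card in islice(cycle(cards), rows):
        woven.append([i for i, hole in enumerate(card) if hole])
    return woven

for lifted in weave(cards, 5):
    print(lifted)
```

After the third row the pattern starts over with the first card, which is what let a weaver repeat a design for as long as the loop kept turning.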

1822 – Charles Babbage (1791-1871) and Ada Augusta, The Countess of Lovelace

Charles Babbage is known as the father of the modern computer (even though none of his computers worked or were even constructed in their entirety). He first drew up plans to build what he called the Automatic Difference Engine. It was designed to help in the construction of mathematical tables for navigation. Unfortunately, engineering limitations of his time made it impossible for the computer to be built. His next project was much more ambitious.

While a professor of mathematics at Cambridge University (in the chair later held by Stephen Hawking), though he never actually lectured there, he proposed the construction of a machine he called the Analytical Engine. It was to have punched card input, a memory unit (called the store), an arithmetic unit (called the mill), automatic printout, sequential program control, and 20-place decimal accuracy. He had actually worked out a plan for a computer 100 years ahead of its time. Unfortunately, it was never completed. It had to wait for manufacturing technology to catch up to his ideas.

During a nine-month period in 1842-1843, Ada Lovelace translated Italian mathematician Luigi Menabrea's memoir on Charles Babbage's Analytical Engine. To her translation she appended a set of notes that specified in complete detail a method for calculating Bernoulli numbers with the Engine. Historians now recognize this as the world's first computer program and honor her as the first programmer. Too bad she has such an ill-received programming language named after her.
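For readers curious about what calculating Bernoulli numbers involves, here is a short Python sketch. It uses a standard modern recurrence with the convention B_1 = -1/2. It is not a reconstruction of the procedure in Lovelace's notes, and the function name is invented for this example.

```python
from fractions import Fraction
from math import comb

def bernoulli_numbers(count):
    """Return the first `count` Bernoulli numbers as exact fractions."""
    numbers = []
    for m in range(count):
        if m == 0:
            numbers.append(Fraction(1))
            continue
        # B_m = -1/(m+1) * sum over k < m of C(m+1, k) * B_k
        total = sum(comb(m + 1, k) * numbers[k] for k in range(m))
        numbers.append(-total / (m + 1))
    return numbers

print([str(b) for b in bernoulli_numbers(5)])
# ['1', '-1/2', '1/6', '0', '-1/30']
```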

1880s – Herman Hollerith (1860-1929)

The computer trail next takes us to, of all places, the U.S. Bureau of Census. In 1880 taking the U.S. census proved to be a monumental task. By the time it was completed, it was almost time to start over for the 1890 census. To try to overcome this problem the Census Bureau hired Dr. Herman Hollerith. In 1887, using Jacquard’s idea of punched card data storage, Hollerith developed a punched card tabulating system, which allowed the census takers to record all the information needed on punched cards. The cards were then placed in a special tabulating machine with a series of counters. When a lever was pulled, a number of pins came down on the card. Where there was a hole, the pin went through the card, made contact with a tiny pool of mercury below, and tripped one of the counters by one. With Hollerith’s machine the 1890 census tabulation was completed in 1/8 the time. And they checked the count twice.
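The counting scheme described above, one counter per hole position, can be sketched in Python. The hole names here are hypothetical and do not reflect Hollerith's actual card layout.

```python
from collections import Counter

# Each card is the set of positions punched for one person.
# The position names are hypothetical, not Hollerith's actual card layout.
cards = [
    {"male", "farmer"},
    {"female", "teacher"},
    {"male", "teacher"},
]

def tabulate(cards):
    """Advance one counter per punched hole on every card."""
    counters = Counter()
    for card in cards:
        for hole in card:        # a pin passes through each hole ...
            counters[hole] += 1  # ... and trips that counter by one
    return counters

totals = tabulate(cards)
print(totals["male"], totals["teacher"])  # 2 2
```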

After the census, Hollerith turned to using his tabulating machines for business and in 1896 organized the Tabulating Machine Company, which later merged with other companies to become IBM. His contribution to the computer, then, is the use of punched card data storage. Incidentally, the punched cards used in computers were made the same size as those of Hollerith’s machine. Hollerith chose that size because it matched the one dollar bill of the time, so he could find plenty of boxes just the right size to hold the cards.

1939-1942 Dr. John Vincent Atanasoff (1903-1995) and Clifford Berry (1918-1963)

Dr. John Vincent Atanasoff and his graduate assistant, Clifford Berry, built the first truly electronic computer, called the Atanasoff-Berry Computer or ABC. Atanasoff said the idea came to him as he was sitting in a small roadside tavern in Illinois. This computer used a circuit with 45 vacuum tubes to perform the calculations, and capacitors for storage. This was also the first computer to use binary math.

1943 – Colossus I

The first really successful electronic computer was built in Bletchley Park, England. It was capable of performing only one function, that of code breaking during World War II. It could not be re-programmed.

1944 – Mark I - Howard Aiken (1900-1973) and Grace Hopper (1906-1992)

In 1944 Dr. Howard Aiken of Harvard finished the construction of the Automatic Sequence Controlled Calculator, popularly known as the Mark I. It contained over 3000 mechanical relays and was the first electro-mechanical computer capable of making logical decisions, in the sense of "if x == 3, then do this" rather than "if it’s raining outside, I need to carry an umbrella." It could perform an addition in 3/10 of a second. Compare that with something on the order of a couple of nanoseconds (billionths of a second) today.

The important contribution of this machine was that it was programmed by means of a punched paper tape, and the instructions could be altered. In many ways, the Mark I was the realization of Babbage’s dream.

One of the primary programmers for the Mark I was Grace Hopper. One day the Mark I was malfunctioning and not reading its paper tape input correctly. Ms. Hopper checked out the reader and found a dead moth in the mechanism with its wings blocking the reading of the holes in the paper tape. She removed the moth, taped it into her log book, and recorded: "Relay #70 Panel F (moth) in relay. First actual case of bug being found."

She had debugged the program, and while the word "bug" had been used to describe defects since at least 1889, she is credited with coining the word "debugging" to describe the work of eliminating program errors. It was Howard Aiken who, in 1947, made the rather short-sighted comment to the effect that the computer is a wonderful machine, but that six such machines would be enough to satisfy all the computing needs of the entire United States.

1946 – ENIAC - J. Presper Eckert (1919-1995) and John W. Mauchly (1907-1980)

The first all-electronic computer was the Electronic Numerical Integrator and Computer, known as ENIAC. It was designed by J. Presper Eckert and John W. Mauchly of the Moore School of Engineering at the University of Pennsylvania. ENIAC was the first multipurpose electronic computer, though very difficult to re-program. It was primarily used to compute aircraft courses and shell trajectories and to break codes during World War II.

ENIAC occupied a 20 x 40 foot room and used 18,000 vacuum tubes. ENIAC also could never be turned off: if it was, it blew too many tubes when turned back on. It had a very limited storage capacity, and it was programmed by jumper wires plugged into a large board.

1948 – The Transistor

In 1948 an event occurred that was to forever change the course of computers and electronics. Working at Bell Labs, three scientists, John Bardeen (1908-1991) (left), Walter Brattain (1902-1987) (right), and William Shockley (1910-1989) (seated), invented the transistor.

The changeover from vacuum tube circuits to transistor circuits occurred between 1956 and 1959. This brought in the second generation of computers, those based on transistors. The first generation was mechanical and vacuum tube computers.

1951 – UNIVAC

The first practical electronic computer was built by Eckert and Mauchly (of ENIAC fame) and was known as UNIVAC (UNIVersal Automatic Computer). The first UNIVAC was used by the Bureau of Census. The unique feature of the UNIVAC was that it was not a one-of-a-kind computer. It was mass produced.

1954 – IBM 650

In 1954 the first electronic computer for business was installed at General Electric's Appliance Park in Louisville, Kentucky. This year also saw the beginning of operation of the IBM 650 in Boston. This comparatively inexpensive computer gave IBM the lead in the computer market. Over 1000 650s were sold.

1957-59 – IBM 704

Between 1957 and 1959 the IBM 704 computer appeared, for which the Fortran language was developed. At this time the state of the art in computers allowed 1 component per chip, that is, individual transistors.

1958 - 1962 – Programming languages

From 1958 to 1962 many programming languages were developed: FORTRAN (FORmula TRANslator), COBOL (COmmon Business Oriented Language), LISP (LISt Processor), ALGOL (ALGOrithmic Language), and BASIC (Beginner's All-purpose Symbolic Instruction Code).

1964 – IBM System/360

In 1964 the third generation of computers began with the introduction of the IBM System/360. Thanks to the new hybrid circuits (that gross-looking orange thing in the circuit board on the right), the state of the art in computer technology allowed for 10 components per chip.

1965 - PDP-8

In 1965 the first integrated circuit computer, the PDP-8 from Digital Equipment Corporation, appeared. (PDP stands for Programmed Data Processor.) After this the real revolution in computer cost and size began.

1970 - Integrated Circuits

By the early 70s the state of the art in computer technology allowed for 1000 components per chip. To get an idea of just how much the size of electronic components had shrunk by this time, look at the image on the right. The woman is peering through a microscope at a 16K RAM memory integrated circuit. The stand her microscope is sitting on is a 16K vacuum tube memory circuit from about 20 years earlier.

1971

The Intel Corporation produced the first microprocessor chip, which was a 4-bit chip. Today’s chips are 64-bit. At approximately 1/16 x 1/8 inches in size, this chip contained 2,300 transistors and had all the computing power of ENIAC. It matched IBM computers of the early 60s that had a CPU the size of an office desk.

1975 – Altair 8800

The January 1975 issue of Popular Electronics carried the first article to describe the Altair 8800, the first low-cost microprocessor computer, which had just become commercially available.

Late 1970s to early 1980s – The Microcomputer Explosion

During this period many companies appeared and disappeared, manufacturing a variety of microcomputers (they were called "micro" to distinguish them from the mainframes, which some people referred to as "real computers"). There was Radio Shack’s TRS-80, the Commodore 64, the Atari, but...

1977 - The Apple II

The most successful of the early microcomputers was the Apple II, designed and built by Steve Wozniak. With his fellow computer whiz and business-savvy friend Steve Jobs, he started Apple Computer in 1977 in Woz’s garage. Less than three years later the company earned over $100 million. Not bad for a couple of college dropout computer geeks. An interesting article about Woz and the Apple I appeared in the March 2016 issue of Smithsonian Magazine.

1981

In 1981, IBM produced their first microcomputer. Then the clones started to appear. This microcomputer explosion fulfilled its slogan, "computers by the millions for the millions."

Compared to ENIAC, microcomputers of the early 80s:

- Were 20 times faster (the Apple II ran at the speed of ¼ Megahertz).
- Had a memory capacity as much as 16 times larger (the Apple had 64 K).
- Were thousands of times more reliable.
- Consumed the power of a light bulb instead of a locomotive.
- Were 1/30,000 the size.
- Cost 1/10,000 as much in comparable dollars (an Apple II with the full 64 K of RAM cost $1200 in 1979; that’s the equivalent of about $8000 to $10000 in today's dollars).

1984-1989

In 1984 the Macintosh was introduced. This was the first mass-produced, commercially available computer with a Graphical User Interface. In 1985 Windows 1.0 was introduced for the PC. It was sort of Mac-like but greatly inferior. Macintosh owners were known to refer to it sarcastically as AGAM-84: Almost as Good As Macintosh 84.

1990s

Compared to ENIAC, microcomputers of the 90s:

- Were 36,000 times faster (450 Megahertz was the average speed).
- Had a memory capacity 1000 to 5000 times larger (the average was between 4 and 20 Megabytes).
- Were 1/30,000 the size.
- Cost 1/30,000 as much in comparable dollars (a PC still cost around $1500, the equivalent of about $2500 in 2008 dollars).

Early 2000s

Compared to ENIAC, microcomputers of the early 2000s:

- Are 180,000 times faster (2.5+ Gigahertz is the average speed).
- Have a memory capacity 25,000 times larger (average 1+ Gigabytes of RAM).
- Are 1/30,000 the size.
- Cost 1/60,000 as much in comparable dollars (a PC can cost from $700 to $1500).

Data Storage

Data storage has also grown in capacity and shrunk in size as dramatically as have computers. Today a single data DVD will hold around 4.8 gigabytes. It would take 90,000,000 punch cards to hold the same amount of data. And there is talk of a new high density video disk (HVD) that will be able to hold fifty times that much data. That's more than 240 gigabytes.

Just how much data is that?

- 8 bits = 1 byte
- 1024 bytes = 1 Kilobyte
- 1024 KB = 1 Megabyte = 1,048,576 bytes
- 1024 MB = 1 Gigabyte = 1,073,741,824 bytes
- 1024 GB = 1 Terabyte = 1,099,511,627,776 bytes
- 1024 TB = 1 Petabyte = 1,125,899,906,842,624 bytes
- 1024 PB = 1 Exabyte = 1,152,921,504,606,846,976 bytes
- 1024 EB = 1 Zettabyte = 1,180,591,620,717,411,303,424 bytes
- 1024 ZB = 1 Yottabyte = 1,208,925,819,614,629,174,706,176 bytes

By comparison, 1 K is approximately the memory needed to store one single-spaced typed page.
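Each unit above is 1024 times the one before it, so the byte counts are simply successive powers of 1024. A short Python sketch (the names `UNITS` and `unit_sizes` are invented for this example) reproduces the figures:

```python
UNITS = ["kilobyte", "megabyte", "gigabyte", "terabyte", "petabyte",
         "exabyte", "zettabyte", "yottabyte"]

def unit_sizes():
    """Map each unit name to its size in bytes, using powers of 1024."""
    return {name: 1024 ** power for power, name in enumerate(UNITS, start=1)}

for name, size in unit_sizes().items():
    print(f"1 {name} = {size:,} bytes")
```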
